Overview

In light of the rapid development and implementation of systems such as automatic face recognition (AFR) technology (Furnell & Clarke, 2014), speech recognition technology (Savchenko & Savchenko, 2021), and behavioural biometric identification based on, for example, how people use a computer mouse (Siddiqui et al., 2022), research must come to understand the flaws and biases in these systems.

This scoping review aimed to identify the types of bias in AI-biometric systems, the steps being taken to mitigate these biases, how effective these steps are, and any gaps in the literature.

Database searches were conducted on Web of Science and PsycINFO. In total, the searches identified 80 papers, and a further 10 were found by scanning the selected articles for relevant references. After title and abstract review, 28 papers were read in full and 23 were identified as fitting the criteria for this review. From the 23 selected papers, four main themes emerged: racial bias, age bias, gender bias, and solutions.

Although some searches included the terms “disability” and “sexuality”, no papers addressing these characteristics were found to fit the inclusion criteria. The implications of systems having demographic biases include a risk of discrimination that potentially breaks equality laws across the world (Wang & Deng, 2019). The research proposed solutions for mitigating bias, yet there did not appear to be a cohesive or interdisciplinary approach. As a result, even solutions that are effective in one context might not generalise more widely, which potentially limits their usefulness. More cross-discipline research is needed to assess and mitigate the biases within AI-biometric systems, ideally before these systems are applied throughout society.

The aim of this project is to learn about bias in emerging biometrics: who these biases primarily impact, where bias in the systems is most prevalent, and what steps can be taken to mitigate it. The review also identifies gaps in the research literature, namely biases that exist but whose risks have not been sufficiently researched. The report provides an overview of the current state of bias in biometrics. As such, this review has four main aims:

- To identify the different types of bias in emerging biometrics systems

- To look at the effectiveness of actions to mitigate bias in emerging biometrics

- To suggest next steps in mitigating bias in emerging biometrics based on current research

- To identify gaps in current research looking at bias in emerging biometrics

Read more

Al-Daraiseh, A., Al Omari, D., Al Hamid, H., Hamad, N., & Althemali, R. (2015). Effectiveness of Iphone’s Touch ID: KSA Case Study. International Journal Of Advanced Computer Science And Applications, 6(1). https://doi.org/10.14569/ijacsa.2015.060122

Alshareef, N., Yuan, X., Roy, K., & Atay, M. (2021). A Study of Gender Bias in Face Presentation Attack and Its Mitigation. Future Internet, 13(9), 234. https://doi.org/10.3390/fi13090234

Alwawi, B., & Althabhawee, A. (2022). Towards more accurate and efficient human iris recognition model using deep learning technology. TELKOMNIKA (Telecommunication Computing Electronics And Control), 20(4), 817. https://doi.org/10.12928/telkomnika.v20i4.23759

Angwin, J., Larson, J., Mattu, S., & Kirchner, L. (2016). Machine Bias. ProPublica. Retrieved 12 September 2022, from https://www.propublica.org/article/machine-bias-risk-assessments-in-criminal-sentencing.

Bacchini, F., & Lorusso, L. (2019). Race, again: How face recognition technology reinforces racial discrimination. Journal of Information, Communication and Ethics in Society, 17(3), 321–335. https://doi.org/10.1108/jices-05-2018-0050

Bellamy, B., Mason, J., & Ellis, M. (1999). Photograph Signatures for the Protection of Identification Documents. Cryptography And Coding, 119-128. https://doi.org/10.1007/3-540-46665-7_13

Bromberg, D., Charbonneau, É., & Smith, A. (2020). Public support for facial recognition via police body-worn cameras: Findings from a list experiment. Government Information Quarterly, 37(1), 101415. https://doi.org/10.1016/j.giq.2019.101415

Buhrmester, V., Münch, D., & Arens, M. (2021). Analysis of Explainers of Black Box Deep Neural Networks for Computer Vision: A Survey. Machine Learning And Knowledge Extraction, 3(4), 966-989. https://doi.org/10.3390/make3040048

Buolamwini, J., & Gebru, T. (2018). Gender shades: Intersectional accuracy disparities in commercial gender classification. In Proceedings of the Conference on Fairness, Accountability and Transparency (Proceedings of Machine Learning Research, Vol. 81, pp. 1–15).

Dressel, J., & Farid, H. (2018). The accuracy, fairness, and limits of predicting recidivism. Science Advances, 4(1). https://doi.org/10.1126/sciadv.aao5580

Drozdowski, P., Rathgeb, C., Dantcheva, A., Damer, N., & Busch, C. (2020). Demographic Bias in Biometrics: A Survey on an Emerging Challenge. IEEE Transactions On Technology And Society, 1(2), 89-103. https://doi.org/10.1109/tts.2020.2992344

Engler, A. (2022). The EEOC wants to make AI hiring fairer for people with disabilities. Brookings. Retrieved 12 October 2022, from https://www.brookings.edu/blog/techtank/2022/05/26/the-eeoc-wants-to-make-ai-hiring-fairer-for-people-with-disabilities/.

Galterio, M., Shavit, S., & Hayajneh, T. (2018). A Review of Facial Biometrics Security for Smart Devices. Computers, 7(3), 37. https://doi.org/10.3390/computers7030037

Gong, S., Liu, X., & Jain, A. K. (2020). Jointly de-biasing face recognition and demographic attribute estimation. In European conference on computer vision, 330-347. Springer, Cham.

Goode, A. (2014). Bring your own finger – how mobile is bringing biometrics to consumers. Biometric Technology Today, 2014(5), 5-9. https://doi.org/10.1016/s0969-4765(14)70088-8

Jain, A., & Kumar, A. (2012). Biometric Recognition: An Overview. The International Library Of Ethics, Law And Technology, 11, 49-79. https://doi.org/10.1007/978-94-007-3892-8_3

Jasserand, C. (2015). Avoiding terminological confusion between the notions of ‘biometrics’ and ‘biometric data’: an investigation into the meanings of the terms from a European data protection and a scientific perspective. International Data Privacy Law, 6(1), 63-76. https://doi.org/10.1093/idpl/ipv020

Kahn, J. (2021). Why HireVue will no longer assess job seekers' facial expressions. Fortune. Retrieved 12 September 2022, from https://fortune.com/2021/01/19/hirevue-drops-facial-monitoring-amid-a-i-algorithm-audit/.

Khiyari, H., & Wechsler, H. (2016). Face Verification Subject to Varying (Age, Ethnicity, and Gender) Demographics Using Deep Learning. Journal Of Biometrics & Biostatistics, 07(04). https://doi.org/10.4172/2155-6180.1000323

Kloppenburg, S., & van der Ploeg, I. (2018). Securing Identities: Biometric Technologies and the Enactment of Human Bodily Differences. Science As Culture, 29(1), 57-76. https://doi.org/10.1080/09505431.2018.1519534

Liang, J., Cao, Y., Zhang, C., Chang, S., Bai, K., & Xu, Z. (2019). Additive adversarial learning for unbiased authentication. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (pp. 11428-11437).

Marsico, M., Nappi, M., Riccio, D., & Wechsler, H. (2016). Leveraging implicit demographic information for face recognition using a multi-expert system. Multimedia Tools And Applications, 76(22), 23383-23411. https://doi.org/10.1007/s11042-016-4085-8

Martinez-Martin, N., Greely, H. T., & Cho, M. K. (2021). Ethical development of digital phenotyping tools for mental health applications: Delphi study. JMIR mHealth and uHealth, 9(7), e27343.

Muhammad, J., Wang, Y., Wang, C., Zhang, K., & Sun, Z. (2021). CASIA-Face-Africa: A Large-Scale African Face Image Database. IEEE Transactions On Information Forensics And Security, 16, 3634-3646. https://doi.org/10.1109/tifs.2021.3080496

Ortega, A., Fierrez, J., Morales, A., Wang, Z., de la Cruz, M., Alonso, C., & Ribeiro, T. (2021). Symbolic AI for XAI: Evaluating LFIT Inductive Programming for Explaining Biases in Machine Learning. Computers, 10(11), 154. https://doi.org/10.3390/computers10110154

Page, M. J., McKenzie, J. E., Bossuyt, P. M., Boutron, I., Hoffmann, T. C., Mulrow, C. D., ... & Moher, D. (2021). The PRISMA 2020 statement: an updated guideline for reporting systematic reviews. BMJ, 372, n71. https://doi.org/10.1136/bmj.n71

Reynolds, C., Altmann, R., & Allen, D. (2021). The Problem of Bias in Psychological Assessment. Mastering Modern Psychological Testing, 573-613. https://doi.org/10.1007/978-3-030-59455-8_15

Robinson, J. P., Livitz, G., Henon, Y., Qin, C., Fu, Y., & Timoner, S. (2020). Face recognition: too bias, or not too bias?. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (pp. 0-1).

Röösli, E., Rice, B., & Hernandez-Boussard, T. (2020). Bias at warp speed: how AI may contribute to the disparities gap in the time of COVID-19. Journal Of The American Medical Informatics Association, 28(1), 190-192. https://doi.org/10.1093/jamia/ocaa210

Saini, B., Kaur, N., & Bhatia, K. (2018). Authenticating Mobile Phone User using Keystroke Dynamics. International Journal Of Computer Sciences And Engineering, 6(12), 372-377. https://doi.org/10.26438/ijcse/v6i12.372377

Savchenko, V., & Savchenko, A. (2021). Method for Measuring Distortions in Speech Signals during Transmission over a Communication Channel to a Biometric Identification System. Measurement Techniques, 63(11), 917-925. https://doi.org/10.1007/s11018-021-01864-x

Serna, I., Pena, A., Morales, A., & Fierrez, J. (2021). InsideBias: Measuring bias in deep networks and application to face gender biometrics. In 2020 25th International Conference on Pattern Recognition (ICPR) (pp. 3720-3727). IEEE.

Siddiqui, N., Dave, R., Vanamala, M., & Seliya, N. (2022). Machine and Deep Learning Applications to Mouse Dynamics for Continuous User Authentication. Machine Learning And Knowledge Extraction, 4(2), 502-518. https://doi.org/10.3390/make4020023

Singh, R., Agarwal, A., Singh, M., Nagpal, S., & Vatsa, M. (2020, April). On the robustness of face recognition algorithms against attacks and bias. In Proceedings of the AAAI Conference on Artificial Intelligence (Vol. 34, No. 09, pp. 13583-13589).

Srinivas, N., Hivner, M., Gay, K., Atwal, H., King, M., & Ricanek, K. (2019, January). Exploring automatic face recognition on match performance and gender bias for children. In 2019 IEEE Winter Applications of Computer Vision Workshops (WACVW) (pp. 107-115). IEEE.

Tahir Sabir, A. (2021). Gait-based Biometric Identification System using Triangulated Skeletal Models (TSM). Academic Journal Of Nawroz University, 10(3), 202-208. https://doi.org/10.25007/ajnu.v10n3a1223

Terhörst, P., Huber, M., Kolf, J. N., Damer, N., Kirchbuchner, F., & Kuijper, A. (2019). Multi-algorithmic Fusion for Reliable Age and Gender Estimation from Face Images. 2019 22th International Conference on Information Fusion (FUSION), 1-8.

Terhörst, P., Kolf, J., Damer, N., Kirchbuchner, F., & Kuijper, A. (2020). Post-comparison mitigation of demographic bias in face recognition using fair score normalization. Pattern Recognition Letters, 140, 332-338. https://doi.org/10.1016/j.patrec.2020.11.007

Terhörst, P., Kolf, J. N., Damer, N., Kirchbuchner, F., & Kuijper, A. (2020b). Face quality estimation and its correlation to demographic and non-demographic bias in face recognition. In 2020 IEEE International Joint Conference on Biometrics (IJCB) (pp. 1-11). IEEE.

Tricco, A., Lillie, E., Zarin, W., O'Brien, K., Colquhoun, H., & Levac, D. et al. (2018). PRISMA Extension for Scoping Reviews (PRISMA-ScR): Checklist and Explanation. Annals Of Internal Medicine, 169(7), 467-473. https://doi.org/10.7326/m18-0850

Vincent, N., & Hecht, B. (2021). Preview of “Data and its (dis)contents: A survey of dataset development and use in machine learning research”. Patterns, 2(11), 100388. https://doi.org/10.1016/j.patter.2021.100388

Wang, M., & Deng, W. (2019). Mitigate bias in face recognition using skewness-aware reinforcement learning. arXiv preprint arXiv:1911.10692.

Wiggers, K. (2021). Bias persists in face detection systems from Amazon, Microsoft, and Google. VentureBeat. Retrieved 12 September 2022, from https://venturebeat.com/ai/bias-persists-in-face-detection-systems-from-amazon-microsoft-and-google/.

Williams, D. (2020). Fitting the description: historical and sociotechnical elements of facial recognition and anti-black surveillance. Journal Of Responsible Innovation, 7(sup1), 74-83. https://doi.org/10.1080/23299460.2020.1831365

Yin, X., Zhu, Y., & Hu, J. (2020). Contactless Fingerprint Recognition Based on Global Minutia Topology and Loose Genetic Algorithm. IEEE Transactions On Information Forensics And Security, 15, 28-41. https://doi.org/10.1109/tifs.2019.2918083

Young-Powell, A. (2021). Ensuring biometrics work for everyone - Raconteur. Raconteur. Retrieved 12 September 2022, from https://www.raconteur.net/hr/diversity-inclusion/ensuring-biometrics-work-for-everyone/.

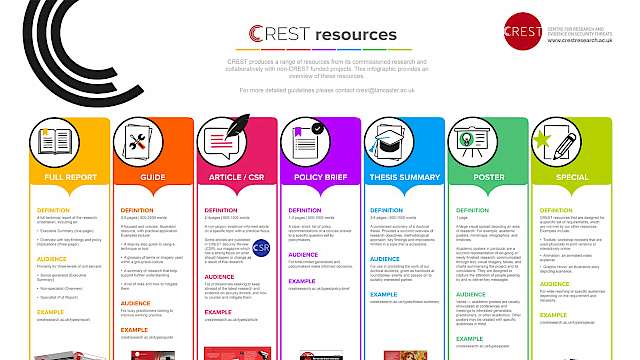

Copyright Information

As part of CREST’s commitment to open access research, this text is available under a Creative Commons BY-NC-SA 4.0 licence. Please refer to our Copyright page for full details.

IMAGE CREDITS: Copyright ©2024 R. Stevens / CREST (CC BY-SA 4.0)